It’s easy to throw audio production terms around without fully understanding what they mean. So we’ve put together a short list of important concepts to help producers, beginning or professional, get acquainted with them quickly. If you plan on interning or working in a studio, you’ll need to be very familiar with these terms. We also encourage you to dig deeper if you want more than a quick overview.

Sound

Sound refers to vibrations that propagate as typically audible mechanical pressure waves through a medium such as air or water. It can also refer to the reception or perception of these waves, by the brain for instance. Sound is caused by the simple but rapid mechanical vibrations of elastic bodies. When these bodies are moved or struck so that they vibrate, the vibrations travel through the medium to the ear and its parts, which communicate the same kind of vibrations to the auditory nerve, and they are then interpreted by the mind.

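Sound waves travel roughly one million times more slowly than light waves.

Here’s the math: sound travels through air at about 1,130 ft/s, while light travels at about 186,000 miles per second, or about 982,080,000 ft/s. 982,080,000 ÷ 1,130 ≈ 869,097, so sound is roughly 870,000 times slower than light.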

The higher the frequency of a sound, the shorter its wavelength. For sound waves in air, the speed of sound is 343 m/s (at room temperature and atmospheric pressure). The wavelengths of the frequencies audible to the human ear (20 Hz to 20 kHz) therefore range from approximately 17 m down to 17 mm, respectively.
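The relationship between frequency and wavelength can be checked with a few lines of Python. This is a minimal sketch, assuming the 343 m/s figure quoted above; the function name `wavelength_m` is only illustrative.

```python
# Speed of sound in air at room temperature, as quoted in the text.
SPEED_OF_SOUND_M_PER_S = 343.0


def wavelength_m(frequency_hz: float,
                 speed_m_per_s: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Return the wavelength in meters: wavelength = speed / frequency."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return speed_m_per_s / frequency_hz


# The two edges of human hearing, plus a midrange tone for comparison.
for f in (20, 1_000, 20_000):
    print(f"{f} Hz -> {wavelength_m(f) * 1000:.1f} mm")
```

A 20 Hz tone comes out at about 17 m and a 20 kHz tone at about 17 mm, which is why low frequencies are so much harder to control in a small room.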

Direct Sound refers to the sound that comes straight from the heart of the sound source. It travels from the source of the sound/noise directly to the listener or microphone, without first bouncing off any surface.

Early Reflections are the first reflections of sound waves to arrive after the direct sound. Reflections off the walls of a mixing studio are an example. The first reflected waves are clean, but later ones degrade and interfere with one another as they disperse and lose energy.

Frequency

Frequency refers to the number of occurrences of a repeating event per unit of time. In the case of sound, it’s the property that most determines pitch. A person with excellent hearing can typically hear frequencies between 20 Hz and 20 kHz. Sound above 20 kHz is called ultrasound and sound below 20 Hz is called infrasound. Some animals are able to hear these frequencies, which may be one reason pets are often traumatized by fireworks or other loud noises.

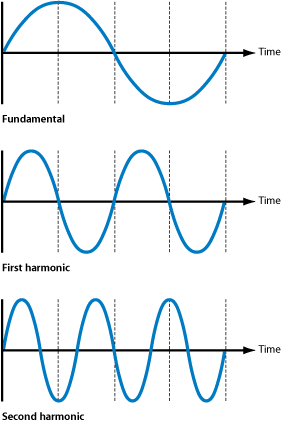

The lowest frequency at which an object vibrates is called the fundamental frequency. The fundamental usually gives the sound its strongest audible pitch reference and is usually the predominant frequency in a complex waveform. A harmonic is one of an ascending series of sonic components that sound above the audible fundamental frequency. Together, these higher-frequency harmonics make up the harmonic spectrum of the sound. Harmonics can be difficult to perceive distinctly as single components, but they are there. In the natural harmonic series, harmonics are positive integer multiples of the fundamental.
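Because the natural harmonic series is just integer multiples of the fundamental, it is easy to list. The sketch below assumes an arbitrary 110 Hz fundamental, and the function name `harmonic_series` is hypothetical. It follows the common convention of counting the fundamental itself as the first harmonic.

```python
def harmonic_series(fundamental_hz: float, count: int) -> list[float]:
    """Return the first `count` harmonics of a fundamental.

    Harmonic n is n times the fundamental, so n = 1 is the fundamental
    itself and n = 2, 3, ... are the components that sound above it.
    """
    if fundamental_hz <= 0 or count < 1:
        raise ValueError("need a positive fundamental and at least one harmonic")
    return [fundamental_hz * n for n in range(1, count + 1)]


# Example: the first five harmonics of a 110 Hz tone (an assumed value).
print(harmonic_series(110.0, 5))  # [110.0, 220.0, 330.0, 440.0, 550.0]
```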

cred: Apple.com

Decibel

Decibels (written as dB) are commonly used to measure sound level, but are also widely used in electronics, signals and communication. The dB is a logarithmic way of describing a ratio. The ratio may be of power, sound pressure, voltage, or intensity, for example. Humans can typically hear sounds from 0 dB up to somewhere between 120 and 140 dB of sound pressure level.
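To make the "logarithmic ratio" idea concrete, here is a small sketch of the standard conversions: 10·log10 for power ratios and 20·log10 for amplitude ratios such as voltage or sound pressure. The function names are illustrative, and the 20 micropascal reference used for sound pressure level is the conventional value, stated here as an assumption since the text above does not give one.

```python
import math


def power_ratio_to_db(ratio: float) -> float:
    """Decibels for a ratio of two powers or intensities."""
    return 10 * math.log10(ratio)


def amplitude_ratio_to_db(ratio: float) -> float:
    """Decibels for a ratio of two amplitudes (voltage, sound pressure)."""
    return 20 * math.log10(ratio)


def spl_db(pressure_pa: float, reference_pa: float = 20e-6) -> float:
    """Sound pressure level relative to an assumed 20 micropascal reference."""
    return amplitude_ratio_to_db(pressure_pa / reference_pa)


print(power_ratio_to_db(2))         # doubling power: about +3 dB
print(amplitude_ratio_to_db(2))     # doubling voltage: about +6 dB
print(amplitude_ratio_to_db(1000))  # a 1000x voltage boost: about +60 dB
print(spl_db(20.0))                 # 20 Pa of pressure: about 120 dB
```

The logarithmic scale is what lets a range of a million to one in sound pressure fit into a span of roughly 120 dB.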

Microphone Preamplifier

Microphone Preamplifiers (“preamps”) boost a microphone’s signal so that the practically inaudible signal becomes audible without adding uncontrollable noise. The boost is on the order of 1,000 times the unamplified signal.

Microphone Level

Microphone Level is the lowest level of signal that is commonly used. It requires a preamplifier to bring the signal up to a reasonable level (see line level).

Line Level

Line Level is the highest common signal level (besides speaker level) used in the audio world. There are two standardized types, -10 dBV (consumer) and +4 dBu (professional), which are named for their nominal signal voltage relative to a reference voltage, not for their power output.
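The two line-level figures are easier to compare once they are converted to volts. This sketch assumes the conventional references, which the text above does not spell out: the consumer figure is measured in dBV (relative to 1 V RMS) and the professional figure in dBu (relative to about 0.7746 V RMS). The helper name `db_to_volts` is hypothetical.

```python
import math

DBV_REFERENCE_VOLTS = 1.0     # 0 dBV = 1 V RMS (assumed convention)
DBU_REFERENCE_VOLTS = 0.7746  # 0 dBu is about 0.7746 V RMS (assumed convention)


def db_to_volts(level_db: float, reference_volts: float) -> float:
    """Convert a level in dB relative to a reference voltage into volts RMS."""
    return reference_volts * 10 ** (level_db / 20)


consumer_v = db_to_volts(-10, DBV_REFERENCE_VOLTS)     # about 0.316 V
professional_v = db_to_volts(4, DBU_REFERENCE_VOLTS)   # about 1.228 V

print(f"consumer:     {consumer_v:.3f} V RMS")
print(f"professional: {professional_v:.3f} V RMS")
print(f"difference:   {20 * math.log10(professional_v / consumer_v):.1f} dB")
```

Because the two figures use different references, the real gap between consumer and professional gear is a little under 12 dB rather than the 14 dB the raw numbers suggest.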

Talkback

The four sections of a mixing console are as follows: Input (audio sources, e.g. instruments and microphones), Output (audio outputs), Communication (talkback/slate), and Monitor (listening). Talkback gives the engineer/producer an effective way to communicate through a microphone and speaker system. The conversation is monitored in both the recording room/space and the studio, allowing everyone involved in the session to coordinate elements and ideas before and after a take without moving from room to room.

Slate

Slate, while similar to talkback, records necessary audio information within the actual takes. It comes from the history of movie production where scenes and takes were written on a slate board that was shown at the beginning of filming. Slating in the audio world works very similarly, allowing the artist/producer/engineer to quickly hear lots of relevant information regarding a project without reading or writing unnecessarily.

cred: theblackandblue.com

Echo

Echo is a series of short, delayed copies of a sound that, when mixed with the original sound, create an audio effect. It can be subtle or pronounced depending on the length and quality of the echo, as well as its volume.
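The idea of mixing delayed, quieter copies back into the original can be sketched on a plain list of samples. This is only an illustration, not how any particular plugin works: the function name `add_echo` and the `delay_samples`, `decay`, and `repeats` parameters are all assumptions made for the example.

```python
def add_echo(samples: list[float], delay_samples: int,
             decay: float = 0.5, repeats: int = 3) -> list[float]:
    """Mix `repeats` delayed copies of a signal into the original.

    Each copy arrives `delay_samples` later than the previous one and is
    scaled by `decay`, so later echoes are quieter.
    """
    if delay_samples <= 0 or repeats < 0:
        raise ValueError("delay must be positive and repeats non-negative")
    out = [0.0] * (len(samples) + delay_samples * repeats)
    for i, sample in enumerate(samples):
        out[i] += sample                      # the original (dry) sound
        gain = 1.0
        for r in range(1, repeats + 1):
            gain *= decay                     # each echo is quieter
            out[i + r * delay_samples] += sample * gain
    return out


# A single click followed by silence makes the echoes easy to see.
click = [1.0, 0.0, 0.0, 0.0]
print(add_echo(click, delay_samples=2, decay=0.5, repeats=3))
# [1.0, 0.0, 0.5, 0.0, 0.25, 0.0, 0.125, 0.0, 0.0, 0.0]
```

A longer delay or a `decay` closer to 1.0 makes the effect more pronounced, while a short delay and a low `decay` keep it subtle.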

Phase

Phase has two different, but closely related, meanings. One is the initial angle of a sinusoid (or wave) at its origin, sometimes called phase offset. The other is the phase relationship between multiple waves, sometimes called phase difference. Simply put, it’s a time relationship of sounds! Phase is incredibly important because sound waves of different frequencies reach different microphones at different times. That makes it much more likely that one microphone’s diaphragm receives the positive part of a wave while another receives the negative part, and the relationship between all of these waves’ phases can be somewhat unpredictable. The more microphones in play, the more likely some sort of phase issue becomes.
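A quick way to see why phase matters is to sum two identical sine waves with different phase offsets, as if two microphones were picking up the same tone. This is a simplified sketch with assumed values (a 1 kHz tone, a 48 kHz sample rate) and illustrative function names. Real multi-microphone recordings involve many frequencies at once.

```python
import math


def sine(frequency_hz: float, phase_deg: float,
         sample_rate: int = 48_000, n: int = 480) -> list[float]:
    """Generate n samples of a unit-amplitude sine with a phase offset."""
    phase = math.radians(phase_deg)
    step = 2 * math.pi * frequency_hz / sample_rate
    return [math.sin(step * i + phase) for i in range(n)]


def mix(a: list[float], b: list[float]) -> list[float]:
    """Sum two signals sample by sample."""
    return [x + y for x, y in zip(a, b)]


def peak(samples: list[float]) -> float:
    """Largest absolute sample value."""
    return max(abs(s) for s in samples)


reference = sine(1_000, 0)
for offset_deg in (0, 90, 180):
    combined = mix(reference, sine(1_000, offset_deg))
    print(f"{offset_deg:3d} degrees apart -> peak {peak(combined):.3f}")
```

At 0 degrees the two signals reinforce each other and the peak doubles. At 180 degrees they cancel almost completely. In between, the result is only partly reinforced.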

Stay tuned for more advanced lessons about audio production in the near future. What production concepts do you want us to cover next? Let us know in the comments below!

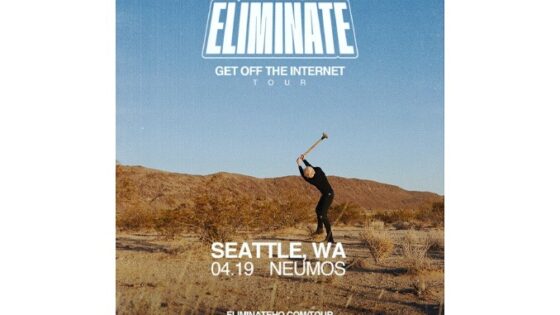

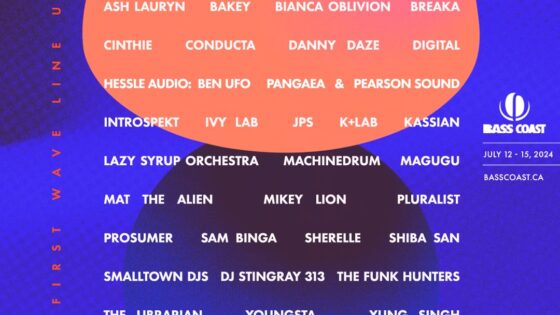
